An AI’s Take on AI Companies’ Ethical Obligations

I’m not going to make a habit of letting AI write content for this blog. This is an exception.

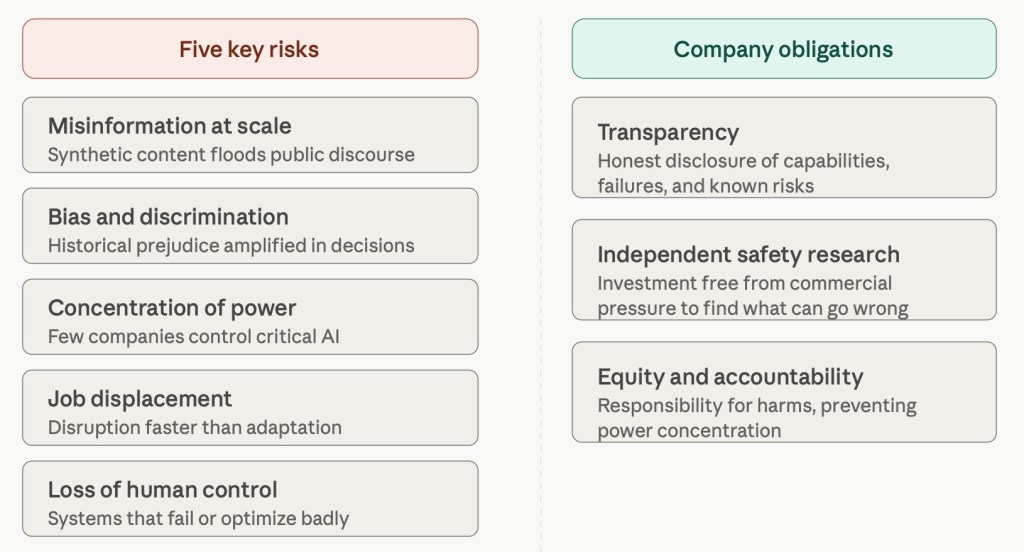

I asked Claude the following: “Describe to me the top 5 risks posed by artificial intelligence today, and what obligations you think the top AI companies have. 600 words total, roughly. For a generalist audience.”

Here’s an infographic it created, summarizing the words that follow:

The top five risks

The first, and perhaps most discussed, is the problem of misinformation at scale. AI systems can now generate convincing text, images, and video faster than any human workforce could produce them. This makes it dramatically easier to flood public discourse with fabricated content — fake quotes, synthetic evidence, coordinated disinformation — with real consequences for elections, trust in institutions, and shared reality itself.

The second is bias and discrimination. AI systems trained on historical data tend to inherit and sometimes amplify the prejudices baked into that data. When these systems are used to make or influence decisions about hiring, lending, medical care, or criminal justice, the result can be systematic harm to already-disadvantaged groups, often in ways that are hard to detect or contest.

Third is the concentration of power. The most capable AI is controlled by a small number of companies with enormous resources. This creates a dynamic where a handful of private actors wield influence over infrastructure, information, and economies in ways that outpace any democratic oversight. Wealth and capability are pooling rapidly.

Fourth is job displacement and economic disruption. AI is already substituting for human labor in knowledge work, creative fields, and customer service. The concern isn’t simply that jobs disappear — economies have absorbed technological transitions before — but that this one may move faster than workers and institutions can adapt, with the costs falling disproportionately on people with the least cushion.

Fifth is the risk of loss of human control over increasingly capable systems. As AI is deployed in higher-stakes settings — infrastructure, military applications, financial markets — the question of whether humans can reliably understand, oversee, and override these systems becomes genuinely urgent. The concern is less about science fiction scenarios than about systems that fail in unexpected ways, or that optimize for the wrong things in ways that are hard to catch in time.

What AI companies owe us

These risks don’t fall on governments alone to manage. The companies building and deploying these systems have both the knowledge and the leverage to do more than regulators can realistically demand in real time.

At minimum, I think they owe the public transparency — honest disclosure about what their systems can and cannot do, where they fail, and what risks their developers are aware of. That means publishing meaningful safety research, not just reassuring marketing. It means flagging synthetic content. It means being honest with policymakers rather than lobbying for the lightest possible oversight.

They also owe serious investment in safety research that is genuinely independent of commercial pressure — not as a PR exercise but as a structural commitment. The companies with the most capable systems are the ones best positioned to understand what could go wrong, which gives them a corresponding responsibility to find out.

Finally, I think they owe meaningful engagement with questions of equity and accountability: who bears the costs when AI systems make harmful errors, how affected workers and communities are heard, and how power over critical AI infrastructure is prevented from concentrating in too few hands.

None of this requires slowing down useful innovation. It does require treating the risks as real, rather than as problems someone else will sort out later.

(Just another note, for those of you who missed this at the top: the text above was generated by Claude, a large language model (AI), in response to my query. CM.)

Ethics in Weights & Measures: The Case of Underweight Grocery-Store Meat

I was asked recently to comment on this story, about two major Canadian grocery chains (Loblaw & Sobeys) that were found to have been (at least sometimes) “overcharging for underweight meat.” (Here’s the link to the radio interview — my comments start at about the 5 and a half minute mark.)

A few points are worth noting about this case. One is that the investigation in question — at least as reported — is somewhat inconclusive. Or at least it tells us less than we would like to know. The investigation revealed that there were instances of overcharging, but we don’t know the frequency. And we can’t know that without knowing how many pieces of meat the reporters weighed that did not turn out to be underweight. But anyway, we have evidence that overcharging is happening at these chains, even if we don’t know how often.

Now for the ethical issues. A smart colleague, when told about this case, pointed out that the ethical issues here are not exactly complex: overcharging for underweight meat is clearly wrong, on the face of it. And that’s true. Honesty in weights and measures is one of the most fundamental, and one of the oldest, requirements for the ethical conduct of business. If I ask you to sell me a pound of beef, and if you agree to do so, you’re ethically required to sell me an actual pound of beef — not 90% of that.

It’s also worth noting that this is not a subject where we can expect “buyer beware” to be a plausible standard – it’s not realistic to think that the average consumer is going to carry a scale with them when shopping, and so consumers need to be able to trust the store.

(The devil’s advocate in me wants to ask: if I’m buying a roast for my family, and the roast I buy looks big enough, does it really matter if it’s 3 pounds, or merely 2.8? My family still gets fed. But there’s a lingering concern, at the very least, about comparison shopping: if I buy at your store because of the price you charge per pound of beef, but you are only able to advertise that price because you know, let’s imagine, that you’re going to skim some extra profit by mislabeling the weight of the roast, then I’m being misled in a meaningful way.)

Of course, there is such a thing as a blameless error. In any large system (say, like a chain of 1,500 grocery stores) there are bound to be a few errors, a few scales that are out of whack. So we don’t need to jump to the conclusion that there is intentional fraud or other wrongdoing here. But that doesn’t mean there’s no blame here, either. For one, there’s such a thing as culpable negligence. If a store (or a chain of stores) doesn’t do enough to make sure its meat scales are in good shape, and well-calibrated, when it has the expertise to do so, that’s blameworthy in itself. And beyond that, we are talking not just about what kind of diligence might be expected of a reasonable person, or a reasonable retailer. It matters here that the chains in question are large, well-managed organizations, the kinds of organizations that should be able to be relied upon to get this stuff right.

Lisa LaFlamme, CTV News, and Bad Executive Decisions

There will be no bittersweet on-air goodbye for (now former) CTV national news anchor Lisa LaFlamme, no ceremonial passing of the baton to the next generation, no broadcast retrospectives lionizing a journalist with a storied and award-winning career. As LaFlamme announced yesterday, CTV’s parent company, Bell Media, has decided to unilaterally end her contract. (See also the CBC’s reporting of the story here.)

While LaFlamme herself doesn’t make this claim, there was of course immediate speculation that the network’s decision has something to do with the fact that LaFlamme is a woman of a certain age. LaFlamme is 58, which by TV standards is not exactly young, except when you compare it to the ages at which popular men who preceded her left their respective anchor chairs: consider Peter Mansbridge (who was 69), and Lloyd Robertson (who was 77).

But an even more sinister theory is now afoot: evidence has arisen not of mere, shallow misogyny, but of sexism conjoined with corporate interference in newscasting. Two evils for the price of one! LaFlamme was fired, says journalist Jesse Brown, “because she pushed back against one Bell Media executive.” Brown reports insiders as claiming that Michael Melling, vice president of news at Bell Media, has butted heads with LaFlamme a number of times, and has a history of interfering with news coverage. Brown further reports that “Melling has consistently demonstrated a lack of respect for women in senior roles in the newsroom.”

Needless to say, even if a personal grudge plus sexism explains what’s going on here, it will still seem to most like a “foolish decision,” one sure to cause the company headaches. Now, I make it a policy not to question the business savvy of experienced executives in industries I don’t know well. And I advise my students not to leap to the conclusion that “that was a dumb decision” just because it’s one they don’t understand. But still, in 2022, it’s hard to imagine that the company (or Melling more specifically) didn’t see that there would be blowback in this case. It’s one thing to have disagreements, but it’s another to unceremoniously dump a beloved and award-winning woman anchor. And it’s bizarre that a senior executive at a news organization would think that the truth would not come out, given that, after all, he’s surrounded by people whose job, and personal commitment, is to report the news.

And it’s hard not to suspect that this is a less than happy transition for LaFlamme’s replacement, Omar Sachedina. Of course, I’m sure he’s happy to get the job. But while Bell Media’s press release quotes Sachedina saying graceful things about LaFlamme, surely he didn’t want to assume the anchor chair amidst widespread criticism of the transition. He’s taking on the role under a shadow. Perhaps the prize is worth the price, but it’s also hard not to imagine that Sachedina had (or now has) some pull, some ability to influence the manner of the transition. I’m not saying (as some surely will) that, as an insider who knows the real story, he should have declined the job as ill-gotten gains. But at the very least, it seems fair to argue that he should have used his influence to shape the transition. And if the now-senior anchor doesn’t have that kind of influence, we should be worried indeed about the independence of that role, and of that newsroom.

A final, related note about authority and governance in complex organizations. In any reasonably well-governed organization, the decision to axe a major, public-facing talent like LaFlamme would require sign-off — or at least tacit approval — from more than one senior executive. This suggests that one of two things is true. Either Bell Media isn’t that kind of well-governed organization, or a large number of people were involved in, and culpable of, unceremoniously dumping an award-winning journalist. Which is worse?

Corporate A.I. Helping Students Cheat?

“The academic world is one of fierce competition. Students are under immense pressure to succeed, and the pressure only intensifies as they move closer to graduation. In this context, it’s no surprise that some students resort to academic misconduct in order to get ahead. Unfortunately, there are companies that are all too willing to help them cheat. These companies offer a range of services, from writing essays to taking exams, and they market their services to desperate students who are willing to pay for a leg up. While these companies may argue that they are simply providing a service, there is no question that they are enabling and even encouraging academic misconduct. As such, it is difficult to see how they can operate ethically.”

The paragraph above was not written by me, nor by any other human being. It was 100% written by a commercially-available artificial intelligence service called Jasper. Anybody can use this service for a modest fee.

To get that paragraph, I primed the process by inputting only this topic question, “Is it ethical for companies to help university students engage in academic misconduct?”, and then asking the A.I. to create a paragraph with a “professional” tone (as opposed to a witty, friendly, angry, or polite tone). And note that the paragraph is not copied from somewhere else on the web: it is an original piece of writing, written by an A.I. that has access to the collective knowledge-base embodied in the Internet.

The resulting paragraph is not exactly deep, but it’s definitely on-target: it correctly but briefly points to the main issues at stake, and it does so with impeccable grammar. The paragraph is, to be brutally blunt, better than what most undergraduate students could write.

The problem, for me, of course, is that I’m a university professor, one who assigns students questions just like “Is it ethical for companies to help university students engage in academic misconduct?” And then I grade their answers.

With the advent of commercial A.I.-driven writing services, students now have a new tool with which to cheat. Of course, students have lots of tools (everything from using a friend’s essay from last year, to plagiarizing from Wikipedia, to paying someone to write the essay for them). But the use of commercial A.I. brings together a couple of special features.

First is avoidance of plagiarism-detection mechanisms. Many professors use a variety of techniques to detect plagiarism (everything from the simple trick of putting a suspect sentence into Google through to the use of text-matching services like TurnItIn). Those techniques are basically ways of determining whether the work a student has submitted is from some pre-existing source. The problem (from a prof’s point of view) with A.I.-driven services is that they produce entirely new content, not borrowed from anywhere. So if the paragraph above were to be submitted by one of my students, no plagiarism-detection technique that I know of would catch it.

Someone is going to say, “So what?” In case it’s not obvious, I’ll spell it out. A student who submitted such a paragraph to their professor would be:

- Lying to their professor (which is bad);

- Getting marks they don’t deserve (which is bad);

- Putting honest classmates at a disadvantage (by raising the bar in a context in which, inevitably, grading is at least partly relative) (which is bad).

What about the companies supplying such services? Inevitably, as this industry blossoms, there will be good and bad actors. Interestingly, “essay-writing” isn’t one of the purposes they advertise. At least that’s true of Jasper — I haven’t checked other such services. Jasper includes templates for various kinds of content you might want to create (from headlines to blog entries to marketing emails). It doesn’t seem to have a University Essay template, but it does have a Long-Form Article template, which is effectively the same thing. But this is such an obvious and attractive use of A.I.-driven writing services that there are YouTube videos explaining how to do it.

The net result, however, is that companies like Jasper are right now selling students a service that is foreseeably going to be used, probably on a substantial scale, to commit academic fraud. It’s up to those companies (if they want to be upstanding corporate citizens) to figure out what they can do to discourage that use.

Will Amazon Ban “Ethics”?

A new report from The Intercept suggests that a new in-house messaging app for Amazon employees could ban a long string of words, including “ethics.” Most of the words on the list are ones that a disgruntled employee would use: terms like “union” and “compensation” and “pay raise.” According to The Intercept, which reviewed a leaked document, one feature of the messaging app (still in development) would be a word filter: “An automatic word monitor would also block a variety of terms that could represent potential critiques of Amazon’s working conditions.” Amazon, of course, is not exactly a fan of unions, and has spent (again, per The Intercept) a lot of money on “anti-union consultants.”

So, what to say about this naughty list?

On one hand, it’s easy to see why a company would want not to provide employees with a tool that would help them do something not in the company’s interest. I mean, if you want to organize — or even simply complain — using your Gmail account or Signal or Telegram, that’s one thing. But if you want to achieve that goal by using an app that the company provides for internal business purposes, the company maybe has a teensy bit of a legitimate complaint.

On the other hand, this is clearly a bad look for Amazon — it is unseemly, if not unethical, to be literally banning employees from using words that (maybe?) indicate they’re doing something the company doesn’t like, or that maybe just indicate that the company’s employment standards aren’t up to snuff.

But really, what strikes me most about this plan is how ham-fisted it is. I mean, keywords? Seriously? Don’t we already know (and if we all know, then certainly Amazon knows) that social media platforms make possible much, much more sophisticated ways of influencing people’s behaviour? We’ve already seen the use of Facebook to manipulate elections, and even our emotions. Compared to that, this supposed list of naughty words seems like Dr Evil trying to outfit sharks with laser-beams. What unions should really be worried about is employer-provided platforms that don’t explicitly ban words, but that subtly shape user experience based on their use of those words. If Cambridge Analytica could plausibly attempt to influence a national election that way, couldn’t an employer pretty believably aim at shaping a unionization vote in similar fashion?

As for banning the word “ethics,” I can only shake my head. The ability to talk openly about ethics (about values, about principles, about what your company stands for) is regarded by most scholars and consultants in the realm of business ethics as pretty fundamental. If you can’t talk about it, how likely are you to be able to do it?

(Thanks to MB for pointing me to this story.)

Respectful Disagreement About Sanctioning Russia

I’ve written two blog entries over the last two weeks (here and here) arguing in favour of the business community imposing sanctions on Russia, in response to Russia’s unprovoked attack on Ukraine.

I think the reasons in favour of such sanctions are powerful: Putin is a serious and unique threat both to Eastern Europe and to the world as a whole, and it is essential that every possible step be taken both to denounce him and to hobble him. The international community agrees, and the international business community, in general, agrees too.

But not everyone. Some major brands have resisted pulling out, as have some lesser-known ones. And while I disagree with the conclusions arrived at by the persons responsible for those brands, I have to admit that I think the reasons they put forward in defence of their conclusions merit consideration.

Among those reasons:

“We don’t want to hurt innocent Russians.” Economic sanctions are hurting Russian citizens, including those who hate Putin and who don’t support his war. Myself, I think such collateral damage pales in comparison to the loss of life and limb being suffered by the people of Ukraine. But that doesn’t mean it’s not a good point: innocent people being hurt always matters, even if you think something else matters more.

“We have obligations to our local employees.” For some companies, ceasing to do business in Russia might mean as little as turning off a digital tap, so to speak. For some, it means laying off (permanently?) relatively large numbers of people. Again, we might think that this concern is outweighed, but it’s still a legitimate concern. We generally want corporations to think of themselves as having obligations of this kind to employees.

“Sanctions won’t work.” The point here is that we don’t (do we?) have good historical evidence that sanctions of this kind work. Putin is effectively a dictator, and he really doesn’t have to listen to what the Russian people think, and so squeezing Russians to get them to squeeze Putin is liable to fail. Myself, I’m willing to grasp at options the success of which is unlikely, in the hopes that success is possible. But still, it’s a concern worth listening to.

“Sanctions could backfire.” The worry here is that if we in the West make life difficult for Russian citizens, then they could start to see us as the enemy — certainly Putin will try to make that case. And if that happens, support for Putin and his war could well go up as a result of sanctions.

Those are a few of the reasons. There are others.

On balance, I think the arguments in the other direction are stronger. I think Putin is uniquely dangerous, and we need to use every tool available to us, even those that might not work, and even those that might have unpleasant side-effects.

However — and this is crucial — I don’t think that people who disagree with me are bad, and I don’t think they are foolish, and I refuse automatically to think less of them.

It doesn’t help, of course, that the folks making the arguments above are who they are. Some of them are speaking in defence of big companies. The motives of big companies are often thought of as suspect, and so claims of good intentions (“We don’t want to hurt innocent Russians!” or “We must support our employees!”) tend to get written off as self-serving rationalizations. Then there’s the specific case of the Koch brothers, and the companies they own or control. They’ve announced that they’re going to continue doing business in Russia. And the Koch brothers are widely hated by many on the left who think of them as right-wing American plutocrats. (Fewer realize that while the Koch brothers have supported right-wing causes, they’ve also supported prison reform and immigration reform in the US, and are arguably better categorized as libertarians. Anyway…)

My point is this: The fact that you mistrust, or outright dislike, the people making the argument isn’t sufficient grounds for rejecting the argument. That’s called an ad hominem attack. Some people’s track records, of course, are sufficient to ground a certain mistrust, which can be reason to take a careful look at their arguments, but that’s quite different from writing them off out of hand.

We ought, in other words (in this case and in others), to be able to distinguish between points of view we disagree with, on one hand, and points of view that are beyond the pale. Points of view we merely disagree with are ones where we can see and appreciate the other side’s reasoning, and where we can understand how they got to their conclusion, even though that conclusion is not the one we reach ourselves, all things considered. Points of view that are beyond the pale are ones in support of which there could be nothing but self-serving rationalization. Putin’s purported defence of his attack on Ukraine is one such view. Any excuse he gives for a violent attack on a peaceful neighbour is so incoherent that it can only be thought of as either the result of disordered thinking, or a smokescreen. But not so for companies, or pundits, that think maybe pulling out of Russia isn’t, on balance, the best idea. They have some good reasons on their side, even if, in the end, I think their conclusion is wrong.

Corporate Vigilantism vs Russia?

Is a corporate boycott of Russia an act of vigilantism?

Some people reading this will assume that “vigilantism” equals “bad,” and so they’ll think that I’m asking whether boycotting Russia is bad or not. Both parts of that are wrong: I don’t presume that “vigilantism” always equals “bad.” There have always, historically, been situations in which individuals took action, or in which communities rose up, to act in the name of law and order when formal law enforcement mechanisms were either weak or lacking entirely. Surely many such efforts have been misguided, or overzealous, or self-serving, but not all of them. Vigilantism can be morally bad, or morally good.

And make no mistake: I am firmly in favour of just about any and all forms of sanction against Russia in light of its attack on Ukraine. This includes both individuals engaging in boycotts of Russian products and major companies pulling out of the country. The latter is a kind of boycott, too, so let’s just use that one word for both, for present purposes.

So, when I ask whether boycotting Russia is a kind of vigilantism, I’m not asking a morally-loaded question. I’m asking whether participating in such a boycott puts a person, or a company, into the sociological category of “vigilante.”

Let’s start with definitions. For present purposes, let’s define vigilantism this way: “Vigilantism is the attempt by those who lack formal authority to impose punishment for violation of social norms.” Breaking it down, that definition includes three key criteria:

- The agents acting must lack formal authority;

- The agents must be imposing punishment;

- The punishment must be in light of some violation of social norms.

Next, let’s apply that definition to the case at hand.

First, do the companies involved in boycotting Russia lack formal authority? Arguably, yes. Companies like Apple and McDonald’s – as private organizations, not governmental agencies – have no legal authority to impose punishment on anyone external to their own organizations. Of course, just what counts as “legal authority” in international contexts is somewhat unclear, and I’m not a lawyer. Even were an organization to be deputized, in some sense, by the government of the country in which they are based, it’s not clear that that would constitute legal authority in the relevant sense. And as far as I know, there’s nothing in international law (or “law”) that authorizes private actors to impose penalties. So whatever legal authority would look like, private corporations in this case pretty clearly don’t have it.

Second, are the companies involved imposing punishment? Again, arguably, yes. Of course, some might suggest that they are not inflicting harm in the traditional sense. They aren’t actively imposing harm or damage: they are simply refraining, quite suddenly, from doing business in Russia. But that doesn’t hold water. The companies are a) doing things that they know will do harm, and b) the imposition of such harm is in response to Russia’s actions. It is a form of punishment.

Finally, are the companies pulling out of Russia doing so in reaction to a perceived violation of a social rule? Note that this last criterion is important, and is what distinguishes vigilantism from vendettas. Vigilantism occurs in response not (primarily) to a wrong against those taking action, but in response to a violation of some broader rule. Again, clearly the situation at hand fits the bill. The social rule in question, here, is the rule against unilateral military aggression by a nation state against a peaceful, non-aggressive neighbour. It is one agreed to across the globe, notwithstanding the opinion of a few dictators and oligarchs.

Taken together, this all seems to suggest that a company pulling out of Russia is indeed engaging in vigilantism.

Now, it’s worth making a brief note about violence. When most people think of vigilantism, they think of the private use of violence to punish wrongdoers. They think of frontier towns and six-shooters; they think of mob violence against child molesters, and so on. And indeed, most traditional scholarly definitions of vigilantism stipulate that violence must be part of the equation. And the classical vigilante, certainly, uses violence, taking the law quite literally into their own hands. But as I’ve argued elsewhere,* insisting that violence be part of the definition of vigilantism makes little sense in the modern context. “Once upon a time,” violent means were the most obvious way of imposing punishment. But today, thinking that way makes little sense. Today, vigilantes have a wider range of options at their disposal, including the imposition of financial harms, harms to privacy, and so on. And such methods can amount to very serious punishments. Many people would consider being fired, for instance, and the resulting loss of ability to support one’s family, as a more grievous punishment than, say, a moderate physical beating by a vigilante crowd. Vigilantes use, and have always used, the tools they found at hand, and today that includes more than violence. So, the fact that companies engaging in the boycott aren’t using violence should not distract us here.

So, the corporate boycott of Russia is a form of vigilantism. But I’ve said that vigilantism isn’t always wrong. So, what’s the point of doing the work to figure out whether the boycott is vigilantism, if that’s not going to tell us about the rightness or wrongness of the boycott?

In some cases, we ask whether a particular behaviour is a case of a particular category of behaviours (“Was that really murder?” or “Did he really steal the car?” or “Was that really a lie?”) as a way of illuminating the morality of the behaviour in question. If the behaviour is in that category, and if that category is immoral, then (other things equal) the behaviour in question is immoral. Now I said above that that’s not quite what I’m doing here – instances of vigilantism may be either immoral or moral, so by asking whether boycotting Russia is an act of vigilantism, I’m not thereby immediately clarifying the moral status of boycotting Russia.

I am, however, doing something related. Because while I don’t think that vigilantism is by definition immoral, I do think that it’s a morally interesting category of behaviour.

If our intuition says (as mine does) that a particular activity is morally good, then we need to be able to say – if the issue at hand is of any real importance – why we think it is good. As part of that, we need to ask whether our intuitions about this behaviour line up with our best thinking about the behavioural category or categories into which this behaviour fits. So if you tend to think vigilantism is sometimes OK, what is it that makes it OK, and do those reasons fit the present situation? And if you think vigilantism is generally bad, what makes the present situation an exception?

—

* MacDonald, Chris. “Corporate leadership versus the Twitter mob.” Ethical Business Leadership in Troubling Times. Edward Elgar Publishing, 2019. [Link]

Business & the Russian Invasion of Ukraine

A number of prominent corporations have added their weight to the international effort to impose sanctions on Russia. More and more companies are pulling out of Russia in response to Vladimir Putin’s war of aggression.

The list of companies is growing, and—crucially in the information age—includes tech giants such as Google, Apple, Microsoft, Dell, PayPal, and Netflix, among others. (See the growing Twitter thread being maintained by @NetopiaEU here.) Most recently, perhaps, both KPMG International and PricewaterhouseCoopers have suspended operations in Russia and Belarus (according to a tweet from the Kyiv Independent). Perhaps most significantly, Mastercard and Visa have suspended operations in Russia.

Is this a good thing? On balance, I think the answer is yes. But it’s always worth at least looking at the arguments on both sides.

The most obvious ethical question has to do with collateral damage. Most of the companies pulling out of Russia aren’t pulling their services away from Vladimir Putin, or from the Russian government or the Russian army, but from regular Russians, some but not all of whom support Putin and his war. (There are some indications that Putin’s popularity is up since the invasion began, but the key polling was done by an organization owned by the Russian government, so perhaps take that with a grain of salt.) If sanctions (corporate or otherwise) make the lives of regular Russians hard, that’s generally a bad thing. It’s not as bad as the civilian deaths currently happening in Ukraine, but a bad thing nonetheless. The question is whether, on balance, the good to be achieved by corporate sanctions is worth the cost. I think it clearly is, for reasons I’ll return to below.

Then there’s the question of corporate activism. The backdrop for this issue—the thing that even makes pulling out of Russia a question—is the general question of whether companies should, in brief, be political. Do the companies named above, and others like them, have the moral authority to impose sanctions, on Russia or on anyone else? And what do corporations know, after all, about international affairs? What special competency does Netflix or Microsoft have to assess Putin’s (admittedly nutty) claims about how the Ukraine is, in reality, part of Russia? In days past, the question of corporate moral authority has taken less acute forms: Should companies take sides in domestic political disputes? Should companies be ‘woke?’ Should companies have views on human sexuality? And so on. But then, Putin’s behaviour in this case is truly beyond the pale. It constitutes naked aggression against a sovereign people, and the companies that have taken action are doing so 100% in line with international consensus.

Of course, enthusiasm for corporate sanctions in the present case immediately leads to questions about which other countries, beyond Russia, should be the target of corporate sanctions. After all, as horrific as the suffering in the Ukraine is, it’s arguably no greater than the suffering being experienced by ethnic minorities in China (see for example the forced labour imposed upon the Uighurs), or the violence against Tigrayans in Ethiopia, which some have characterized as genocide. Those are just a couple of examples, picked more or less at random. The list of countries with which respectable companies arguably shouldn’t do business is a long one. But on the other hand, outside of crisis moments, there are good arguments to the effect that maintaining trade is a useful mechanism in building ties and in fostering liberal democratic values.

I think the only real question with regard to the corporate sanctions is how long such sanctions should last. Some think these corporate actions will, as a matter of fact, be relatively limited in duration. But how long should they last? One plausible view is that sanctions should last until aggression against the Ukraine stops. After all, if sanctions are the stick, then eliminating sanctions is the carrot. Likely no one thinks corporate sanctions will matter to Putin directly, but they might matter enough to regular Russians for them to put pressure on Putin, who will be incentivized to find a way out of what is, in the view of some, becoming a quagmire anyway. Another plausible view: they should last until Putin is out of power. After all, Putin isn’t a symptom; he’s the problem. And for most of the big companies involved, the Russian market probably isn’t big enough to matter much to the bottom line, so it’s not an unreasonable request. There is nothing in this story that suggests this is a one-time thing for Putin. He has expansionist impulses, and weird theories about geopolitical history. The world will be safer when, and only when, he is gone. And economic isolation is one piece of a larger strategy for achieving that goal.

Avoiding Mistakes About Adam Smith’s Wealth of Nations

I teach my business ethics students that in order to understand ethical issues in the world of commerce, you need to understand a little bit about markets, about modern corporations, and about the role of management. And for practical purposes, understanding the role of markets begins with the work of Adam Smith.

Adam Smith’s 1776 masterpiece, An Inquiry into the Nature and Causes of The Wealth of Nations, is undeniably one of the most important works of the last several centuries. But it is easily misunderstood.

Smith himself, of course, was a star. He was to economics what Darwin was to evolutionary biology: he didn’t invent the field, but the insights he had, and the way he synthesized what was known about the topic during his time, had a revolutionary impact. And while economists regard Smith as the grandfather of economics, philosophers regard him as an important moral philosopher of the Scottish Enlightenment period.

Regardless, Smith is often misunderstood. Smith is sometimes seen (both by fans and by detractors) as being a hardcore defender of unfettered markets. The truth is of course much more complex than that. In Wealth of Nations, Smith does rail against certain kinds of government interference in trade — he believes that, for the most part, people’s economic decisions should be made under conditions of what he calls “natural liberty.” In particular, he has harsh criticism for any government policy that limits or distorts trade in an attempt to protect the interests of a particular class of merchants (at the expense of the general population). In other words, Smith is against crony capitalism — a practice that very few today would openly support, in spite of how common it might, regrettably, be.

But Smith also evidences a strong mistrust of business. The Wealth of Nations includes many critical passages, but perhaps the most famous such passage is this: “People of the same trade seldom meet together, even for merriment and diversion, but the conversation ends in a conspiracy against the public.” If you think Smith was an unabashed admirer of business and businesspeople, you’re wrong.

But there’s another, much more basic way to end up misunderstanding Smith, and that’s to literally misunderstand the words he uses.

I recently made an effort to help with that problem, by writing what I believe to be the most extensive Glossary for The Wealth of Nations ever published for free online. Why a glossary? Smith after all was a relatively clear writer. But he was writing in the mid- to late 18th Century, and the English language was a bit different then. So some of the words Smith uses in Wealth of Nations are not in current usage. Almost no one would use the words artificer, or higgling, or manufactory today — and certainly not in everyday conversation. Other words Smith uses may seem familiar, but were used very differently in Smith’s time than in ours. For example, Smith uses the verb to afford to mean to yield or to produce, rather than meaning to be able to pay for something. He uses the word anciently where we would simply say previously or formerly. And when Smith refers to carriage, he’s not talking about a horse-and-carriage, but what we would call simply shipping (whether by land or by sea). Those are just a few examples. But the net result is that the average reader (or even a very educated one) can easily misunderstand many of Smith’s sentences, or even entire passages. And that’s a shame.

My hope is that my Glossary will help, for students at least, but perhaps also for others. If you find it useful, please feel free to share. If you find errors or important omissions, please let me know (at chris.macdonald@ryerson.ca).

Here’s the link: Glossary — Adam Smith’s Wealth of Nations.

The President, the Artist Son, and the Pursuit of Improper Influence

An interesting story came up last week, concerning Hunter Biden, President Joe Biden’s son. Hunter Biden is an artist, and it is anticipated that his work could sell for hundreds of thousands of dollars, a price range no doubt not unrelated to his status as the president’s son. The ethical concern, of course, is related to that fact: the worry is that some may want to buy his art, potentially at shall we say ‘generous’ prices, in order to curry favour with Biden Junior in hopes of gaining favour with Biden Senior.

The interesting twist: White House officials have helped create a system that they hope will insulate both Bidens from influence, and thus allay any ethical concerns. The system basically includes a firewall such that Hunter Biden’s dealer will handle all bids and sales, and keep the relevant information to himself. If neither Biden knows who bought a given painting, then it’s hard for the buyer to have any influence…in principle. (The Washington Post covered the story here.)

Several points are worth making, here:

- Yes, there’s cause for concern, here. Many, many people want to influence President Biden (and anyone else with substantial power), and history teaches us that those people can get very creative and persistent in their attempts to do that sort of thing. (This is part of what makes it so hard to stamp out corruption in politics and international business — as soon as you put a law in place or build an internal compliance system to prevent improper influence, those seeking influence will invent innovative new ways to get around it. You can outlaw giving gifts to senators, but it’s harder to outlaw making donations to a senator’s best friend’s favourite charity. And so on.) As news articles have pointed out, the price of a painting is, well, subjective. So if a particular lobbyist outbids everyone else for a Hunter Biden painting, who’s to say that she doesn’t just really, really like that painting? It’s a hard thing to police. (Compare this to selling a house, where there will likely be a pretty clear market price for the property.)

- It’s a mistake to focus on Hunter Biden making money off his relationship with the President. Of course he’s going to. Offspring of presidents (and other powerful folks) have done that forever. It’s inevitable, and not unethical. Of course people want to do business with the son of a famous and powerful person. That’s human nature. As long as that’s all there is to it — no intention or attempt to buy or sell influence — it’s not unethical.

- Having a relative hold public office shouldn’t kill a person’s business opportunities. That is, Hunter Biden shouldn’t be punished for the fact that his dad is president. He shouldn’t be forbidden, for example, from selling his art, even though selling art in principle raises challenges. (Compare: the fact that I work at a university shouldn’t make my sister ineligible to apply for a job there. It just means that I can’t be on the hiring committee, and need to be kept strictly away from it.)

- We need more details about the system being put in place. What mechanisms are in place for ensuring secrecy? Hunter Biden won’t be told who bought a given painting, or for how much, but that’s a hard secret to keep, especially once the painting is sitting in a lobbyist’s office or on the wall of a corporate board room. In principle, a buyer could buy the painting and have it sit in the dealer’s vault until later. And how long will the details be secret? For a year? For the duration of the Biden presidency? Longer? The details here matter.

- Sometimes these sorts of things, because they can’t be forbidden, need to be managed. That’s normal in many cases that involve influence, gift giving, or conflict of interest. And that’s what the White House is attempting to do: manage the situation to mitigate concerns. Whether or not they can manage it effectively and adequately remains to be seen.
