An AI’s Take on AI Companies’ Ethical Obligations

I’m not going to make a habit of letting AI write content for this blog. This is an exception.

I asked Claude the following: “Describe to me the top 5 risks posed by artificial intelligence today, and what obligations you think the top AI companies have. 600 words total, roughly. For a generalist audience.”

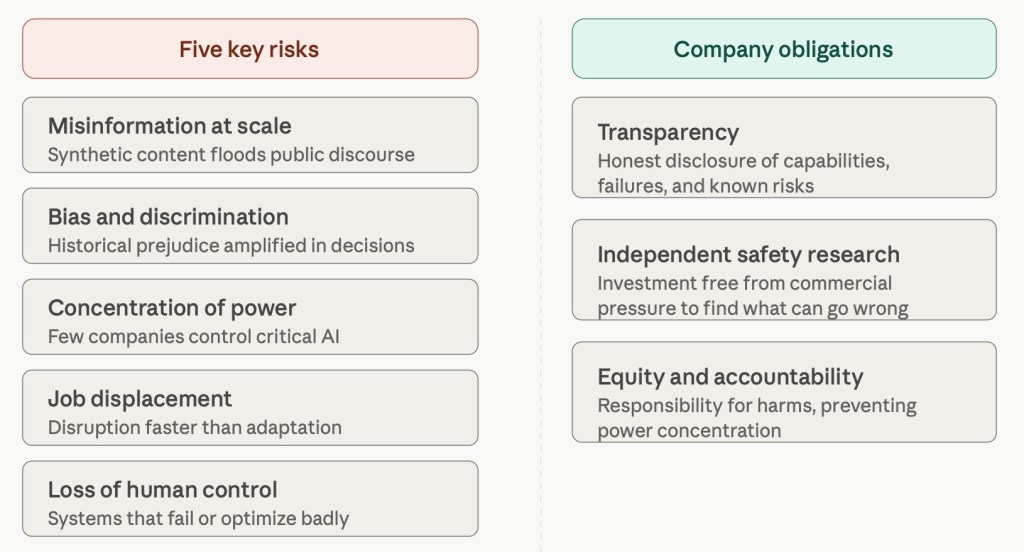

Here’s an infographic it created, summarizing the words that follow:

The top five risks

The first, and perhaps most discussed, is the problem of misinformation at scale. AI systems can now generate convincing text, images, and video faster than any human workforce could produce them. This makes it dramatically easier to flood public discourse with fabricated content — fake quotes, synthetic evidence, coordinated disinformation — with real consequences for elections, trust in institutions, and shared reality itself.

The second is bias and discrimination. AI systems trained on historical data tend to inherit and sometimes amplify the prejudices baked into that data. When these systems are used to make or influence decisions about hiring, lending, medical care, or criminal justice, the result can be systematic harm to already-disadvantaged groups, often in ways that are hard to detect or contest.

Third is the concentration of power. The most capable AI is controlled by a small number of companies with enormous resources. This creates a dynamic where a handful of private actors wield influence over infrastructure, information, and economies in ways that outpace any democratic oversight. Wealth and capability are pooling rapidly.

Fourth is job displacement and economic disruption. AI is already substituting for human labor in knowledge work, creative fields, and customer service. The concern isn’t simply that jobs disappear — economies have absorbed technological transitions before — but that this one may move faster than workers and institutions can adapt, with the costs falling disproportionately on people with the least cushion.

Fifth is the risk of loss of human control over increasingly capable systems. As AI is deployed in higher-stakes settings — infrastructure, military applications, financial markets — the question of whether humans can reliably understand, oversee, and override these systems becomes genuinely urgent. The concern is less about science fiction scenarios than about systems that fail in unexpected ways, or that optimize for the wrong things in ways that are hard to catch in time.

What AI companies owe us

These risks don’t fall on governments alone to manage. The companies building and deploying these systems have both the knowledge and the leverage to do more than regulators can realistically demand in real time.

At minimum, I think they owe the public transparency — honest disclosure about what their systems can and cannot do, where they fail, and what risks their developers are aware of. That means publishing meaningful safety research, not just reassuring marketing. It means flagging synthetic content. It means being honest with policymakers rather than lobbying for the lightest possible oversight.

They also owe serious investment in safety research that is genuinely independent of commercial pressure — not as a PR exercise but as a structural commitment. The companies with the most capable systems are the ones best positioned to understand what could go wrong, which gives them a corresponding responsibility to find out.

Finally, I think they owe meaningful engagement with questions of equity and accountability: who bears the costs when AI systems make harmful errors, how affected workers and communities are heard, and how power over critical AI infrastructure is prevented from concentrating in too few hands.

None of this requires slowing down useful innovation. It does require treating the risks as real, rather than as problems someone else will sort out later.

(Just another note, for those of you who missed this at the top: the text above was generated by Claude, a large language model (AI), in response to my query. CM.)
